Unit - 3

Software Testing

Q1) What is software testing?

A1) Software Testing

Testing is the process of executing a programme with the intention of finding errors. Our applications need to be as free of errors as possible to work well. Testing does not remove errors by itself: it reveals them so that they can be fixed, and testing is successful when it uncovers errors that were not yet known.

Software testing is the process of evaluating the correctness and consistency of the software product or service under test. Its purpose is to verify whether the product meets the customer's specific requirements, needs, and expectations. Ultimately, testing executes a system or programme to reveal bugs, errors, or faults with a particular goal in mind.

Software testing is a way of verifying whether the actual software product meets the expected specifications and of ensuring that the product is free of defects. It involves executing software/system components, manually or with automated tools, to evaluate one or more properties of interest. The aim of software testing is to find errors, gaps, or missing requirements compared with the actual requirements.

Software testing is commonly divided into White Box testing and Black Box testing. In simple words, software testing means verifying the Application Under Test (AUT).

Q2) What are the benefits of software testing?

A2) Benefits of software testing

Software testing offers several benefits:

● Cost-effective: This is one of the main benefits of software testing. Testing every IT project on time saves money in the long term, because bugs caught at an earlier stage of testing cost less to fix.

● Security: This is one of the most sensitive benefits of software testing, because people look for trustworthy products. Testing helps to eliminate risks and problems earlier.

● Product quality: Quality is an essential requirement for any software product. Testing ensures that consumers receive a reliable product.

● Customer satisfaction: The main goal of every product is to satisfy its consumers. UI/UX testing helps to ensure the best user experience.

Q3) Describe white box testing?

A3) White box testing

● In this testing technique the internal logic of software components is tested.

● It is a test case design method that uses the control structure of the procedural design to derive test cases.

● It is done in the early stages of software development.

● Using this testing technique, a software engineer can derive test cases that:

  - Guarantee that all independent paths within a module have been exercised at least once.

  - Exercise both the true and false sides of all logical decisions.

  - Execute all loops at their boundaries and within their operational bounds.

  - Exercise internal data structures to ensure their validity.
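As a minimal sketch of deriving tests from internal structure, the example below uses a hypothetical function `count_passing(scores, pass_mark)` (not from the original text). Each test targets a specific structural element: the `if` decision on both its true and false sides, and the loop run zero times, once, and several times.

```python
def count_passing(scores, pass_mark):
    """Hypothetical unit under test: count scores >= pass_mark."""
    count = 0
    for score in scores:          # loop: exercised 0, 1 and many times below
        if score >= pass_mark:    # decision: exercised on both True and False sides
            count += 1
    return count


def test_count_passing():
    # Loop executed zero times (empty input).
    assert count_passing([], 40) == 0
    # Loop executed once; decision evaluates to True.
    assert count_passing([40], 40) == 1
    # Loop executed once; decision evaluates to False.
    assert count_passing([39], 40) == 0
    # Loop executed several times; both branches are taken.
    assert count_passing([10, 40, 75, 39], 40) == 2


test_count_passing()
print("all white-box tests passed")
```

The test cases come from reading the code, not the specification, which is what distinguishes this from black box testing.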

Advantages:

● As knowledge of the internal coding structure is a prerequisite, it becomes easy to find out which type of input/data can help in testing the application effectively.

● The other advantage of white box testing is that it helps in optimizing the code.

● It helps in removing the extra lines of code, which can bring in hidden defects.

● We can test the structural logic of the software.

● Every statement is tested thoroughly.

● Forces test developers to reason carefully about implementation.

● Approximates the partitioning done by execution equivalence.

● Reveals errors in "hidden" code.

Disadvantages:

● It does not ensure that the user requirements are fulfilled.

● As knowledge of code and internal structure is a prerequisite, a skilled tester is needed to carry out this type of testing, which increases the cost.

● It is nearly impossible to look into every bit of code to find out hidden errors, which may create problems, resulting in failure of the application.

● The tests may not be applicable in a real world situation.

● Functionality that was omitted from the code (missing requirements) can go undetected, because tests are derived from the code that exists.

Q4) Explain black box testing?

A4) Black box testing

Black box testing, also known as "behavioral testing", focuses on the functional requirements of the software and is performed at later stages of the testing process, unlike white box testing, which takes place at an early stage. Black-box testing uses the functional requirements of a program to derive sets of input conditions that should be tested. Black box testing is not an alternative to white-box testing; rather, it is a complementary approach that is likely to uncover a different class of errors than white-box testing.

Black-box testing attempts to find errors in the following categories:

- Incorrect or missing functions

- Interface errors

- Errors in data structures or external database access

- Behavior or performance errors

- Initialization and termination errors.

Boundary value analysis: Test inputs are chosen at the lower and upper ends (boundaries) of the input range. If these values pass the test, it is assumed that all the values in between will pass too.
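A minimal sketch of boundary value analysis, assuming a hypothetical input field that accepts integer ages from 18 to 60 inclusive. The helper `boundary_values` (also hypothetical) produces the classic six inputs: just below, at, and just above each end of the range.

```python
def boundary_values(low, high):
    """Return classic boundary-value test inputs for an inclusive range."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


def is_valid_age(age):
    """Hypothetical unit under test: accepts ages 18..60 inclusive."""
    return 18 <= age <= 60


# Expected results are written out explicitly from the specification.
expected = {17: False, 18: True, 19: True, 59: True, 60: True, 61: False}

for age in boundary_values(18, 60):
    assert is_valid_age(age) == expected[age], f"boundary failure at age={age}"
print("boundary value tests passed")
```

Off-by-one mistakes such as writing `18 < age` instead of `18 <= age` are caught by the input 18, which a test using only a "typical" value like 35 would miss.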

Equivalence class testing: The input domain is divided into classes of data that are expected to be processed in the same way. If one element of a class passes the test, it is assumed that the whole class passes.
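A minimal sketch of equivalence class testing, again assuming a hypothetical validator for integer ages from 18 to 60. The input domain is split into four classes, and only one representative value is tested from each.

```python
def is_valid_age(age):
    """Hypothetical unit under test: accepts integer ages 18..60 inclusive."""
    return isinstance(age, int) and 18 <= age <= 60


# One representative value per equivalence class, with the expected result.
equivalence_classes = {
    "below range (invalid)": (5, False),
    "within range (valid)": (35, True),
    "above range (invalid)": (90, False),
    "not an integer (invalid)": ("abc", False),
}

for name, (representative, expected) in equivalence_classes.items():
    # If the representative behaves as expected, the whole class is assumed to.
    assert is_valid_age(representative) == expected, name
print("one test per equivalence class passed")
```

In practice equivalence classes and boundary values are usually combined: classes pick the regions, and boundaries pick the risky edges between them.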

Decision table testing: The decision table technique is one of the most widely used test case design techniques for black box testing. It is a systematic approach in which the various input combinations and the corresponding system behavior are captured in tabular form. That is why it is also known as a cause-effect table.

This technique is used to pick test cases systematically; it saves testing time and gives good coverage of the software application under test. The decision table technique is appropriate for functions that have a logical relationship between two or more inputs.
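A minimal sketch of decision table testing, assuming a hypothetical login function with two conditions (valid username, valid password). Every combination of conditions is a rule in the table, and each rule becomes one test case.

```python
from itertools import product


def login_outcome(username_ok, password_ok):
    """Hypothetical unit under test."""
    if username_ok and password_ok:
        return "home page"
    return "error message"


# Decision table: each combination of conditions (causes) -> expected action (effect).
decision_table = {
    (True, True): "home page",
    (True, False): "error message",
    (False, True): "error message",
    (False, False): "error message",
}

# Generate every combination so that no rule of the table is skipped.
for username_ok, password_ok in product([True, False], repeat=2):
    expected = decision_table[(username_ok, password_ok)]
    actual = login_outcome(username_ok, password_ok)
    assert actual == expected, (username_ok, password_ok)
    print(f"username_ok={username_ok}, password_ok={password_ok} -> {actual}")
```

With n independent true/false conditions the full table has 2^n rules, which is why the technique suits functions with a small number of interacting inputs.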

Advantages:

● More effective on larger units of code than glass box testing.

● Testers need no knowledge of implementation, including specific programming languages.

● Testers and programmers are independent of each other.

● Tests are done from a user's point of view.

● Helps to expose any ambiguities or inconsistencies in the specifications.

● Test cases can be designed as soon as the specifications are complete.

Disadvantages:

● Only a small number of possible inputs can actually be tested; testing every possible input stream would take nearly forever.

● Without clear and concise specifications, test cases are hard to design.

● There may be unnecessary repetition of test inputs if the tester is not informed of test cases the programmer has already tried.

● May leave many program paths untested.

● Cannot be directed toward specific segments of code which may be very complex (and therefore more error prone).

● Most testing related research has been directed toward glass box testing.

Q5) Write the difference between black box and white box testing?

A5) Difference between Black box and White box testing

| White box testing | Black box testing |
|---|---|
| It is mainly performed by application developers. | It is often performed by software testers. |
| Knowledge of the implementation is required. | No knowledge of the implementation is required. |
| It can be referred to as inner or internal software testing. | It can be referred to as outer or external software testing. |
| It is a structural evaluation of the software. | It is a functional evaluation of the software. |
| Programming knowledge is compulsory. | No programming knowledge is required. |
| It is the logic testing of the software. | It is the behavior testing of the software. |
| In general, it is applicable to the lower levels of software testing. | It is applicable to the higher levels of software testing. |
| Sometimes called clear box testing. | Sometimes called closed box testing. |
| The tester has knowledge of the software's internal structure, code, or programme. | The internal structure, programme, or code is hidden, and nothing about it is known to the tester. |
| Types of white box testing: path testing, loop testing, condition testing. | Types of black box testing: functional testing, non-functional testing, regression testing. |

Q6) Write about software test strategies?

A6) Software testing is a method of assessing the functionality of a software application to detect any software bugs. It checks whether the developed software meets the stated specifications and identifies any defects in the software in order to produce a better product.

It involves executing the software to find any gaps, errors, or missing requirements compared with the actual requirements. It is also defined as the process of verifying and validating a software product.

An effective software testing or QA strategy involves testing at all levels of the technology stack, to ensure that every component, as well as the entire system, works without breaking down. Some useful practices for software testing include:

Leave time for fixing: When problems are identified, developers need time to fix them. Often, the business also needs time to retest the fixes.

Discourage passing the buck: If you want to eliminate back-and-forth exchanges between developers and testers, build a culture in which they can pick up the phone or have a desk-side talk to get to the bottom of an issue. Testing and fixing are all about teamwork.

Manual testing has to be exploratory: If a problem can be written down or scripted in specific terms, it can be automated and belongs in the automated test suite. Real-world use of the software cannot be fully scripted, so manual testers need to try to break things without a script.

Encourage clarity: Bug reports should remove uncertainty rather than create it. Developers, in turn, should also go out of their way to communicate effectively.

Test often: Frequent testing avoids the build-up of massive backlogs of issues, which crushes morale. Testing frequently is considered the safest approach.

Q7) Describe verification and validation?

A7) Verification and Validation

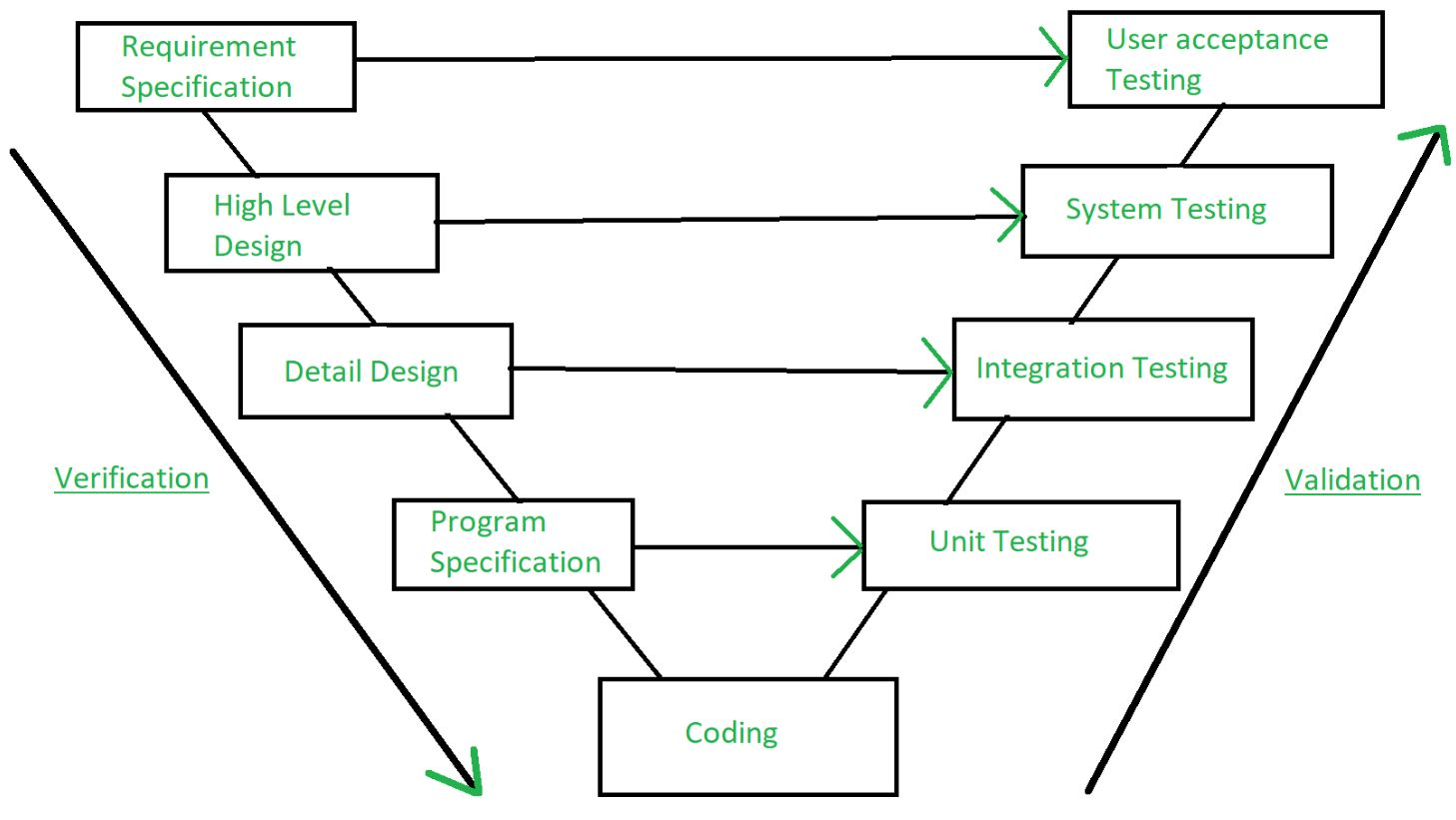

Verification and validation are the processes of checking whether a software system meets its requirements and specifications and fulfills its intended purpose.

Barry Boehm defines verification and validation as follows:

Verification: Are we building the product right?

Validation: Are we building the right product?

Fig 1: Verification and validation

Verification:

Verification is the process of checking that the software achieves its goals without any bugs. It ensures that the product is being built right, i.e., it checks whether the developed product meets the specifications we have. Verification is static testing.

Verification tasks involved:

- Inspections

- Reviews

- Walkthroughs

- Desk-checking

Validation: Validation is the process of checking whether the software product is up to the mark, in other words, whether the product meets the high-level requirements. It checks that we are building the right product by validating the actual product against the expected product.

Validation is dynamic testing.

Validation tasks involved:

- Black box testing

- White-box testing

- Unit testing

- Integration testing

Q8) Explain system testing?

A8) System testing

Software is only one element of a larger computer-based system. Ultimately, software is incorporated with other system elements (e.g., hardware, people, information), and a series of system integration and validation tests are conducted. These tests fall outside the scope of the software process and are not conducted solely by software engineers. However, steps taken during software design and testing can greatly improve the probability of successful software integration in the larger system.

A classic system testing problem is "finger-pointing." This occurs when an error is uncovered, and each system element developer blames the other for the problem. Rather than indulging in such nonsense, the software engineer should anticipate potential interfacing problems and

- Design error-handling paths that test all information coming from other elements of the system,

- Conduct a series of tests that simulate bad data or other potential errors at the software interface,

- Record the results of tests to use as "evidence" if finger-pointing does occur, and

- Participate in planning and design of system tests to ensure that software is adequately tested.
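A minimal sketch of the first three practices, assuming a hypothetical interface `read_temperature(raw)` that receives readings from a sensor (another system element). The function has an explicit error-handling path, the tests feed it simulated bad data, and each outcome is logged so the results can serve as recorded evidence. The valid range of -50 to 150 is an invented example value.

```python
import logging

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("interface-tests")


def read_temperature(raw):
    """Hypothetical interface to a sensor element: parse and validate a reading."""
    try:
        value = float(raw)
    except (TypeError, ValueError):
        # Error-handling path for data that is not a number at all.
        raise ValueError(f"non-numeric sensor data: {raw!r}") from None
    if not -50.0 <= value <= 150.0:
        # Error-handling path for numbers outside the assumed valid range.
        raise ValueError(f"sensor reading out of range: {value}")
    return value


# Simulated bad data arriving from another system element.
bad_inputs = [None, "", "abc", "999", "-273"]

results = []
for raw in bad_inputs:
    try:
        read_temperature(raw)
        outcome = "ACCEPTED (defect)"
    except ValueError as exc:
        outcome = f"rejected: {exc}"
    results.append((raw, outcome))
    # The log is the recorded "evidence" of how the software behaved.
    log.info("input=%r -> %s", raw, outcome)
```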

System testing is actually a series of different tests whose primary purpose is to fully exercise the computer-based system. Although each test has a different purpose, all work to verify that system elements have been properly integrated and perform allocated functions.

Q9) Describe unit testing?

A9) Unit testing

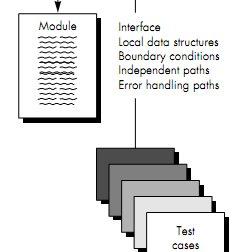

Unit testing focuses verification effort on the smallest unit of software design: the software component or module. Using the component-level design description as a guide, important control paths are tested to uncover errors within the boundary of the module. The relative complexity of tests and uncovered errors is limited by the constrained scope established for unit testing. The unit test is white-box oriented, and the step can be conducted in parallel for multiple components.

Unit Test Considerations

The tests that occur as part of unit tests are illustrated schematically in Figure below. The module interface is tested to ensure that information properly flows into and out of the program unit under test. The local data structure is examined to ensure that data stored temporarily maintains its integrity during all steps in an algorithm's execution. Boundary conditions are tested to ensure that the module operates properly at boundaries established to limit or restrict processing. All independent paths (basis paths) through the control structure are exercised to ensure that all statements in a module have been executed at least once. And finally, all error handling paths are tested.

Fig 2: Unit Test

Tests of data flow across a module interface are required before any other test is initiated. If data does not enter and exit properly, all other tests are moot. In addition, local data structures should be exercised and the local impact on global data should be ascertained (if possible) during unit testing.

Selective testing of execution paths is an essential task during the unit test. Test cases should be designed to uncover errors due to erroneous computations, incorrect comparisons, or improper control flow. Basis path and loop testing are effective techniques for uncovering a broad array of path errors.

Among the more common errors in computation are

● Misunderstood or incorrect arithmetic precedence,

● Mixed mode operations,

● Incorrect initialization,

● Precision inaccuracy,

● Incorrect symbolic representation of an expression.

Comparison and control flow are closely coupled to one another (i.e., change of flow frequently occurs after a comparison). Test cases should uncover errors such as

- Comparison of different data types,

- Incorrect logical operators or precedence,

- Expectation of equality when precision error makes equality unlikely,

- Incorrect comparison of variables,

- Improper or nonexistent loop termination,

- Failure to exit when divergent iteration is encountered, and

- Improperly modified loop variables.
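One of the comparison errors listed above, expecting equality when precision error makes equality unlikely, is easy to demonstrate with floating-point numbers. The sketch below uses only the standard `math.isclose` function; `average` is a hypothetical unit under test.

```python
import math


def average(values):
    """Hypothetical unit under test."""
    return sum(values) / len(values)


result = 0.1 + 0.2
# Exact equality on floating-point results is a classic comparison error:
print(result == 0.3)              # False, because of binary rounding
# A tolerance-based comparison is the safer check in a test case:
print(math.isclose(result, 0.3))  # True

# Test cases for floating-point code should compare within a tolerance.
assert math.isclose(average([0.1, 0.2, 0.3]), 0.2)
```

The same issue affects loop termination: a loop such as `while x != 1.0: x += 0.1` may never terminate, whereas `while x < 1.0` does.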

Among the potential errors that should be tested when error handling is evaluated are

● Error description is unintelligible.

● Error noted does not correspond to error encountered.

● Error condition causes system intervention prior to error handling.

● Exception-condition processing is incorrect.

● Error description does not provide enough information to assist in the location of the cause of the error.

Boundary testing is the last (and probably most important) task of the unit test step. Software often fails at its boundaries. That is, errors often occur when the nth element of an n-dimensional array is processed, when the ith repetition of a loop with i passes is invoked, when the maximum or minimum allowable value is encountered. Test cases that exercise data structure, control flow, and data values just below, at, and just above maxima and minima are very likely to uncover errors.
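A minimal sketch of boundary testing for a loop and an array, assuming a hypothetical function `sum_first(items, k)` that sums the first k elements. The test values sit just below, at, and just above the minimum (k = 0) and the maximum (k = n, where the nth element is processed).

```python
def sum_first(items, k):
    """Hypothetical unit under test: sum of the first k elements of items."""
    if not 0 <= k <= len(items):
        raise IndexError(f"k={k} outside 0..{len(items)}")
    total = 0
    for i in range(k):            # the loop makes exactly k passes
        total += items[i]
    return total


data = [5, 10, 15, 20]            # n = 4 elements

assert sum_first(data, 0) == 0    # minimum: zero passes through the loop
assert sum_first(data, 1) == 5    # just above the minimum
assert sum_first(data, 3) == 30   # just below the maximum
assert sum_first(data, 4) == 50   # maximum: the nth element is processed
for bad_k in (-1, 5):             # just outside both ends must be rejected
    try:
        sum_first(data, bad_k)
        raise AssertionError(f"k={bad_k} should have been rejected")
    except IndexError:
        pass
print("boundary tests passed")
```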

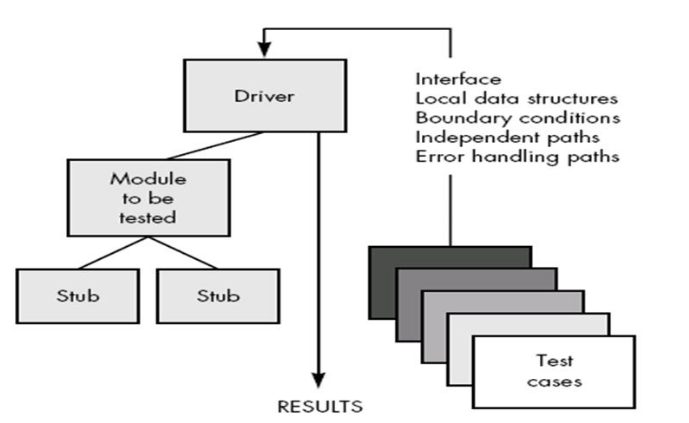

Unit Test Procedures

Unit testing is normally considered as an adjunct to the coding step. After source level code has been developed, reviewed, and verified for correspondence to component level design, unit test case design begins. A review of design information provides guidance for establishing test cases that are likely to uncover errors in each of the categories discussed earlier. Each test case should be coupled with a set of expected results.

Fig 3: Unit Test Environment

Because a component is not a stand-alone program, driver and/or stub software must be developed for each unit test. The unit test environment is illustrated in Figure above. In most applications a driver is nothing more than a "main program" that accepts test case data, passes such data to the component (to be tested), and prints relevant results. Stubs serve to replace modules that are subordinate (called by) the component to be tested.

A stub or "dummy subprogram" uses the subordinate module's interface, may do minimal data manipulation, prints verification of entry, and returns control to the module undergoing testing. Drivers and stubs represent overhead. That is, both are software that must be written (formal design is not commonly applied) but that is not delivered with the final software product. If drivers and stubs are kept simple, actual overhead is relatively low. Unfortunately, many components cannot be adequately unit tested with "simple" overhead software. In such cases, complete testing can be postponed until the integration test step (where drivers or stubs are also used).
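A minimal sketch of a unit test environment with one driver and one stub. The component `compute_invoice_total` and its subordinate module `get_price` are hypothetical. As an assumption that makes the swap easy, the subordinate module is passed in as a parameter, so the stub can stand in for it without changing the component.

```python
def compute_invoice_total(items, get_price):
    """Component under test. get_price is the subordinate module it calls."""
    total = 0.0
    for product_id, quantity in items:
        total += get_price(product_id) * quantity
    return round(total, 2)


def get_price_stub(product_id):
    """Stub: same interface as the real subordinate module, canned data only."""
    print(f"stub entered: get_price({product_id!r})")  # verification of entry
    canned_prices = {"A1": 2.50, "B2": 10.00}
    return canned_prices[product_id]


def driver():
    """Driver: a 'main program' that feeds test cases and prints results."""
    test_cases = [
        ([("A1", 4)], 10.00),
        ([("A1", 2), ("B2", 3)], 35.00),
        ([], 0.00),
    ]
    failures = 0
    for items, expected in test_cases:
        actual = compute_invoice_total(items, get_price_stub)
        if actual != expected:
            failures += 1
        status = "PASS" if actual == expected else "FAIL"
        print(f"{status}: items={items} expected={expected} actual={actual}")
    return failures


if __name__ == "__main__":
    driver()
```

Both the driver and the stub are test-only code that is not delivered with the product, which is the overhead the text describes.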

Unit testing is simplified when a component with high cohesion is designed. When only one function is addressed by a component, the number of test cases is reduced and errors can be more easily predicted and uncovered.

Q10) Write the advantages and disadvantages of unit testing?

A10) Advantages of statement coverage (a common measure of unit testing)

● Can be applied directly to object code and does not require processing source code.

● Performance profilers commonly implement this measure.

Disadvantages of statement coverage

● Insensitive to some control structures (number of iterations)

● Does not report whether loops reach their termination condition

● Statement coverage is completely insensitive to the logical operators (|| and &&).
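A minimal sketch of why statement coverage is insensitive to logical operators, using a hypothetical discount rule. A single test executes every statement, yet it cannot tell `and` apart from `or`.

```python
def grant_discount(is_member, total):
    """Hypothetical unit: discount only for members spending over 100."""
    discount = 0
    if is_member and total > 100:
        discount = 10
    return discount


def grant_discount_buggy(is_member, total):
    """Same unit with a defect: 'or' written instead of 'and'."""
    discount = 0
    if is_member or total > 100:
        discount = 10
    return discount


# This one test gives 100% statement coverage for both versions ...
assert grant_discount(True, 150) == 10
assert grant_discount_buggy(True, 150) == 10   # ... so the defect goes undetected.

# Tests that make each operand False (condition coverage) expose the difference.
assert grant_discount(False, 150) == 0
assert grant_discount_buggy(False, 150) == 10  # differs from the correct version
```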

Q11) What is integration testing?

A11) Integration testing

Integration testing is a systematic technique for constructing the program structure while at the same time conducting tests to uncover errors associated with interfacing. The objective is to take unit tested components and build a program structure that has been dictated by design.

There is often a tendency to attempt non-incremental integration; that is, to construct the program using a "big bang" approach. All components are combined in advance. The entire program is tested as a whole. And chaos usually results! A set of errors is encountered.

Correction is difficult because isolation of causes is complicated by the vast expanse of the entire program. Once these errors are corrected, new ones appear and the process continues in a seemingly endless loop.

Incremental integration is the antithesis of the big bang approach. The program is constructed and tested in small increments, where errors are easier to isolate and correct; interfaces are more likely to be tested completely; and a systematic test approach may be applied.

Q12) Define top-down integration?

A12) Top-down Integration

Top-down integration testing is an incremental approach to construction of program structure. Modules are integrated by moving downward through the control hierarchy, beginning with the main control module (main program). Modules subordinate (and ultimately subordinate) to the main control module are incorporated into the structure in either a depth-first or breadth-first manner.

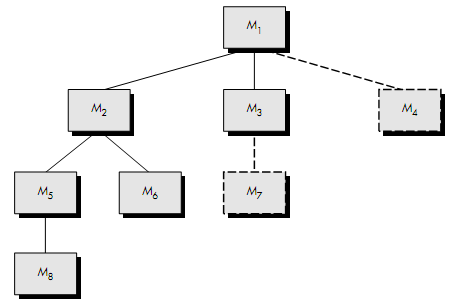

Fig 4: Top down integration

Referring to Figure above, depth-first integration would integrate all components on a major control path of the structure. Selection of a major path is somewhat arbitrary and depends on application-specific characteristics. For example, selecting the left-hand path, components M1, M2, M5 would be integrated first. Next, M8 or (if necessary for proper functioning of M2) M6 would be integrated.

Then, the central and right-hand control paths are built. Breadth-first integration incorporates all components directly subordinate at each level, moving across the structure horizontally. From the figure, components M2, M3, and M4 (a replacement for stub S4) would be integrated first. The next control level, M5, M6, and so on, follows.

The integration process is performed in a series of five steps:

- The main control module is used as a test driver and stubs are substituted for all components directly subordinate to the main control module.

- Depending on the integration approach selected (i.e., depth or breadth first), subordinate stubs are replaced one at a time with actual components.

- Tests are conducted as each component is integrated.

- On completion of each set of tests, another stub is replaced with the real component.

- Regression testing may be conducted to ensure that new errors have not been introduced. The process continues from step 2 until the entire program structure is built.
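A minimal sketch of these steps, assuming a hypothetical main module that calls three subordinates (`parse`, `compute`, `report`). The main module acts as the driver, all subordinates start as stubs, stubs are replaced one at a time (here across one level, i.e. breadth first), and the same test is rerun after every replacement as a regression check.

```python
def main_module(modules, raw):
    """Main control module: also serves as the test driver for its subordinates."""
    values = modules["parse"](raw)
    total = modules["compute"](values)
    return modules["report"](total)


# Step 1: stubs for every module directly subordinate to the main module.
stubs = {
    "parse": lambda raw: [1, 2, 3],          # canned data, ignores its input
    "compute": lambda values: 6,
    "report": lambda total: f"total={total}",
}

# Hypothetical real components that will replace the stubs.
real = {
    "parse": lambda raw: [int(x) for x in raw.split(",")],
    "compute": lambda values: sum(values),
    "report": lambda total: f"total={total}",
}


def run_tests(modules):
    # Rerunning the same test after each replacement acts as regression testing.
    return main_module(modules, "1,2,3") == "total=6"


current = dict(stubs)
assert run_tests(current)                     # main module + all stubs
for name in ["parse", "compute", "report"]:   # replace stubs one at a time
    current[name] = real[name]
    assert run_tests(current), f"failure after integrating {name}"
    print(f"integrated {name}: tests pass")
```

Because only one stub changes per step, a new failure points directly at the component just integrated.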

The top-down integration strategy verifies major control or decision points early in the test process. In a well-factored program structure, decision making occurs at upper levels in the hierarchy and is therefore encountered first. If major control problems do exist, early recognition is essential. If depth-first integration is selected, a complete function of the software may be implemented and demonstrated.

For example, consider a classic transaction structure in which a complex series of interactive inputs is requested, acquired, and validated via an incoming path. The incoming path may be integrated in a top-down manner. All input processing (for subsequent transaction dispatching) may be demonstrated before other elements of the structure have been integrated. Early demonstration of functional capability is a confidence builder for both the developer and the customer.

Top-down strategy sounds relatively uncomplicated, but in practice, logistical problems can arise. The most common of these problems occurs when processing at low levels in the hierarchy is required to adequately test upper levels. Stubs replace low-level modules at the beginning of top-down testing; therefore, no significant data can flow upward in the program structure. The tester is left with three choices:

- Delay many tests until stubs are replaced with actual modules,

- Develop stubs that perform limited functions that simulate the actual module, or

- Integrate the software from the bottom of the hierarchy upward.

The first approach (delay tests until stubs are replaced by actual modules) causes us to lose some control over correspondence between specific tests and incorporation of specific modules. This can lead to difficulty in determining the cause of errors and tends to violate the highly constrained nature of the top-down approach. The second approach is workable but can lead to significant overhead, as stubs become more and more complex.

Q13) Explain bottom up integration?

A13) Bottom-up Integration

Bottom-up integration testing, as its name implies, begins construction and testing with atomic modules (i.e., components at the lowest levels in the program structure). Because components are integrated from the bottom up, processing required for components subordinate to a given level is always available and the need for stubs is eliminated.

A bottom-up integration strategy may be implemented with the following steps:

- Low-level components are combined into clusters (sometimes called builds) that perform a specific software sub-function.

- A driver (a control program for testing) is written to coordinate test case input and output.

- The cluster is tested.

- Drivers are removed and clusters are combined moving upward in the program structure.

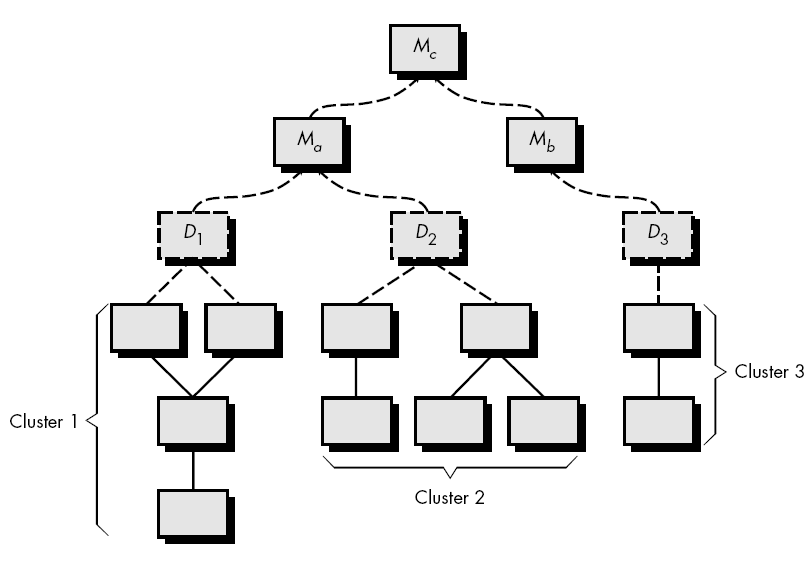

Fig 5: Bottom up integration

Integration follows the pattern illustrated in Figure above. Components are combined to form clusters 1, 2, and 3. Each of the clusters is tested using a driver (shown as a dashed block). Components in clusters 1 and 2 are subordinate to Ma. Drivers D1 and D2 are removed and the clusters are interfaced directly to Ma. Similarly, driver D3 for cluster 3 is removed prior to integration with module Mb. Both Ma and Mb will ultimately be integrated with component Mc, and so forth.

As integration moves upward, the need for separate test drivers lessens. In fact, if the top two levels of program structure are integrated top down, the number of drivers can be reduced substantially and integration of clusters is greatly simplified.
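A minimal sketch of the bottom-up steps, using hypothetical low-level components `tokenize` and `to_numbers`. They are combined into a cluster, the cluster is tested through a driver, and then the driver is dropped and the cluster is called directly by a higher-level module (named `module_ma` here only to echo Ma in the figure).

```python
# Low-level (atomic) components.
def tokenize(text):
    return text.split(",")


def to_numbers(tokens):
    return [int(t.strip()) for t in tokens]


# Cluster (build): components combined to perform one sub-function.
def parse_cluster(text):
    return to_numbers(tokenize(text))


def cluster_driver():
    """Driver: coordinates test-case input and output for the cluster."""
    cases = {"1,2,3": [1, 2, 3], " 7 , 8 ": [7, 8]}
    for text, expected in cases.items():
        actual = parse_cluster(text)
        assert actual == expected, (text, actual)
        print(f"cluster ok: {text!r} -> {actual}")


cluster_driver()


# Moving upward: the driver is no longer needed; the tested cluster
# is interfaced directly to the higher-level module.
def module_ma(text):
    return sum(parse_cluster(text))


assert module_ma("1,2,3") == 6
```

No stubs appear anywhere: every component called during a test is already real, which is the main advantage the text attributes to bottom-up integration.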

Q14) Describe debugging?

A14) Debugging

Software testing is a process that can be systematically planned and specified. Test case design can be conducted, a strategy can be defined, and results can be evaluated against prescribed expectations.

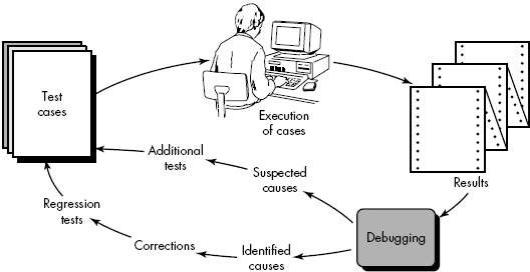

Debugging occurs as a consequence of successful testing. That is, when a test case uncovers an error, debugging is the process that results in the removal of the error. Although debugging can and should be an orderly process, it is still very much an art. A software engineer, evaluating the results of a test, is often confronted with a "symptomatic" indication of a software problem. That is, the external manifestation of the error and the internal cause of the error may have no obvious relationship to one another. The poorly understood mental process that connects a symptom to a cause is debugging.

The Debugging Process

Debugging is not testing but always occurs as a consequence of testing. Referring to Figure above, the debugging process begins with the execution of a test case. Results are assessed and a lack of correspondence between expected and actual performance is encountered. In many cases, the noncorresponding data are a symptom of an underlying cause as yet hidden. The debugging process attempts to match symptom with cause, thereby leading to error correction.

Fig 6: Debugging Process

The debugging process will always have one of two outcomes:

● The cause will be found and corrected, or

● The cause will not be found.

In the latter case, the person performing debugging may suspect a cause, design a test case to help validate that suspicion, and work toward error correction in an iterative fashion.

During debugging, we encounter errors that range from mildly annoying (e.g., an incorrect output format) to catastrophic (e.g., the system fails, causing serious economic or physical damage). As the consequences of an error increase, the amount of pressure to find the cause also increases. Sometimes this pressure forces a software developer to fix one error and at the same time introduce two more.

Debugging Approaches

In general, three categories for debugging approaches may be proposed:

● Brute force,

● Backtracking, and

● Cause elimination.

▪ The brute force category of debugging is probably the most common and least efficient method for isolating the cause of a software error. We apply brute force debugging methods when all else fails. Using a "let the computer find the error" philosophy, memory dumps are taken, run-time traces are invoked, and the program is loaded with WRITE statements. We hope that somewhere in the morass of information that is produced we will find a clue that can lead us to the cause of an error. Although the mass of information produced may ultimately lead to success, it more frequently leads to wasted effort and time. Thought must be expended first!

▪ Backtracking is a fairly common debugging approach that can be used successfully in small programs. Beginning at the site where a symptom has been uncovered, the source code is traced backward (manually) until the site of the cause is found. Unfortunately, as the number of source lines increases, the number of potential backward paths may become unmanageably large.

▪ The third approach to debugging, cause elimination, is manifested by induction or deduction and introduces the concept of binary partitioning. Data related to the error occurrence are organized to isolate potential causes. A "cause hypothesis" is devised and the aforementioned data are used to prove or disprove the hypothesis. Alternatively, a list of all possible causes is developed and tests are conducted to eliminate each. If initial tests indicate that a particular cause hypothesis shows promise, data are refined in an attempt to isolate the bug.
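A minimal sketch of binary partitioning during cause elimination. It assumes a hypothetical failing test `fails(records)` and a data set in which exactly one record triggers the failure on its own. Each step forms the hypothesis "the cause is in the first half" and runs the test to prove or disprove it, halving the search space every time.

```python
def fails(records):
    """Hypothetical test: True if processing these records shows the bug."""
    try:
        for r in records:
            _ = 100 / r["divisor"]
        return False
    except ZeroDivisionError:
        return True


def isolate_cause(records):
    """Halve the failing data set until one record remains.

    Assumes exactly one record triggers the failure on its own.
    """
    if not fails(records):
        raise ValueError("the full data set must reproduce the failure")
    while len(records) > 1:
        mid = len(records) // 2
        first_half = records[:mid]
        # Hypothesis: the cause lies in the first half; the test proves or disproves it.
        records = first_half if fails(first_half) else records[mid:]
    return records[0]


data = [{"id": i, "divisor": 1 + (i % 5)} for i in range(1000)]
data[637]["divisor"] = 0          # the hidden cause
print(isolate_cause(data))        # {'id': 637, 'divisor': 0}
```

For 1000 records this needs about ten test runs instead of inspecting every record, which is why partitioning is preferred over brute force when the failure can be reproduced cheaply.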

Q15) Write short notes on debugging technique?

A15) Debugging technique

In software engineering, debugging is the process of fixing bugs in a programme. In other words, it refers to the identification, analysis, and removal of errors. This activity begins after the programme fails to function properly and ends when the problem has been fixed and the software has been successfully retested. Because errors must be fixed at every stage of debugging, it is considered an extremely complex and repetitive process.

Debugging process

Steps that are involved in debugging include:

● Identification of issues and preparation of reports.

● Assigning the report to a software engineer, who verifies that the defect is genuine.

● Defect analysis using modelling, documentation, finding and testing candidate flaws, etc.

● Defect resolution by making the required modifications to the system.

● Validation of the corrections.

Debugging strategies -

- Study the system in depth to understand it. Depending on the need, this helps debuggers construct different representations of the system being debugged. The system is also actively analysed to find recent changes made to the programme.

- Backward analysis of the problem involves tracing the programme backward from the location of the failure message to identify the region of faulty code. That region is then studied thoroughly to determine the cause of the defect.

- Forward analysis of the programme involves tracing the programme forward using breakpoints or print statements and examining the results at different points in the programme. The region where wrong outputs are first obtained is the region to focus on to locate the fault.

- Using past experience of debugging software with similar problems. The effectiveness of this approach depends on the debugger's expertise.
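A minimal sketch of forward analysis, assuming a hypothetical processing pipeline with a deliberately seeded bug in its last stage. The helper `first_divergence` (also hypothetical) plays the role of print-statement checkpoints: it runs the stages in order, prints each intermediate value next to the expected one, and reports the first stage where the output goes wrong.

```python
def first_divergence(stages, value, expected):
    """Run stages in order; return the name of the first stage whose output is wrong."""
    for (name, stage), exp in zip(stages, expected):
        value = stage(value)
        print(f"after {name}: {value!r} (expected {exp!r})")  # checkpoint
        if value != exp:
            return name
    return None


stages = [
    ("strip", str.strip),
    ("split", lambda s: s.split(";")),
    ("to_int", lambda parts: [int(p) for p in parts]),
    ("total", lambda nums: sum(nums[1:])),  # seeded bug: skips the first element
]
expected = ["3;4;5", ["3", "4", "5"], [3, 4, 5], 12]

print("fault region:", first_divergence(stages, " 3;4;5 ", expected))
```

The earlier stages produce correct output, so attention can be centred on the `total` stage alone.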

Q16) Write the difference between top-down and bottom-up integration testing?

A16) Difference between Top-down and Bottom-up Integration Testing

| Top-down testing | Bottom-up testing |
|---|---|
| Top-down integration testing is an approach to integration testing in which integration proceeds from top to bottom, i.e., system integration begins with the top-level modules. | Bottom-up integration testing is an approach to integration testing in which integration proceeds from bottom to top, i.e., system integration begins with the lowest-level modules. |
| It works from big to small components. | It works from small to big components. |
| It is mostly used with structured/procedure-oriented programming languages. | It is mostly used with object-oriented programming languages. |
| Stub modules must be produced. | Driver modules must be produced. |
| It is relatively simple. | It is more complex and highly data-intensive. |
| It is beneficial if significant defects occur toward the top of the program. | It is beneficial if crucial defects occur toward the bottom of the program. |

Q17) What is the difference between integration and system testing?

A17) Difference between system testing and integration testing

| System testing | Integration testing |
|---|---|
| The system is tested as a whole. | The interfaces between interconnected components are tested. |
| It covers both functional and non-functional testing (performance, usability, etc.). | It is generally limited to the functional aspects of the integrated components. |
| Types of system testing include functional, performance, usability, reliability, security, scalability, and installation testing. | Approaches to integration testing include top-down, bottom-up, big-bang, and hybrid integration. |
| It is performed after integration testing. | It is performed after unit testing. |
| Since the system is tested against its requirements without regard to its internal logic, it uses black-box testing techniques. | Since knowledge of the interfacing logic is required, it uses white-box or grey-box techniques along with black-box techniques. |

Q18) What is Software maintenance?

A18) Software maintenance

Software maintenance is a part of the Software Development Life Cycle. Its primary goal is to modify and update a software application after delivery to correct errors and to improve performance. Software is a model of the real world; when the real world changes, the software requires alteration wherever possible.

Software Maintenance is an inclusive activity that includes error corrections, enhancement of capabilities, deletion of obsolete capabilities, and optimization.

a) Corrective Maintenance

Corrective maintenance aims to correct any remaining errors, regardless of where they occur: in the specifications, design, coding, testing, documentation, etc.

b) Adaptive Maintenance

It involves modifying the software to match changes in its ever-changing environment.

c) Preventive Maintenance

It is the process by which we prevent our system from being obsolete. It involves the concept of reengineering & reverse engineering in which an old system with old technology is re-engineered using new technology. This maintenance prevents the system from dying out.

d) Perfective Maintenance

It involves improving processing efficiency or performance, or restructuring the software to enhance changeability. This may include enhancement of existing system functionality, improvement in computational efficiency, etc.